LLMs

KARLI-hosted chat models are exposed as one provider among the standard set (OpenAI, Anthropic, etc.) and can be selected from any component that accepts a model — most commonly the Language Model and Agent components.

Under the hood, the KARLI provider speaks an OpenAI-compatible API: components select a KARLI model the same way they select an OpenAI model, but requests are routed to the KARLI endpoint.
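To make the routing concrete, here is a minimal sketch of what an OpenAI-compatible chat request aimed at a KARLI endpoint might look like. It only builds the request and sends nothing. The `/chat/completions` path and the Bearer authorization header follow the OpenAI convention and are assumed to carry over to KARLI; the helper name `build_chat_request` and the example base URL are hypothetical.

```python
import json


def build_chat_request(base_url, api_key, model, messages):
    """Build (url, headers, body) for an OpenAI-style chat completion call.

    Assumption: the KARLI endpoint accepts the OpenAI chat-completions
    request shape at <base_url>/chat/completions.
    """
    url = base_url.rstrip("/") + "/chat/completions"
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    body = json.dumps({"model": model, "messages": messages})
    return url, headers, body


url, headers, body = build_chat_request(
    base_url="https://karli.example.invalid/v1",  # hypothetical base URL
    api_key="sk-placeholder",  # placeholder, not a real key
    model="karli/KARLI-MoE-latest",
    messages=[{"role": "user", "content": "Hello"}],
)
print(url)
```

Only the base URL and the model name differ from an equivalent OpenAI request, which is why components can treat KARLI like any other provider.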

Available Models

| Model | Notes |
|---|---|
| karli/KARLI-MoE-latest | Default KARLI chat model; supports tool calling. |

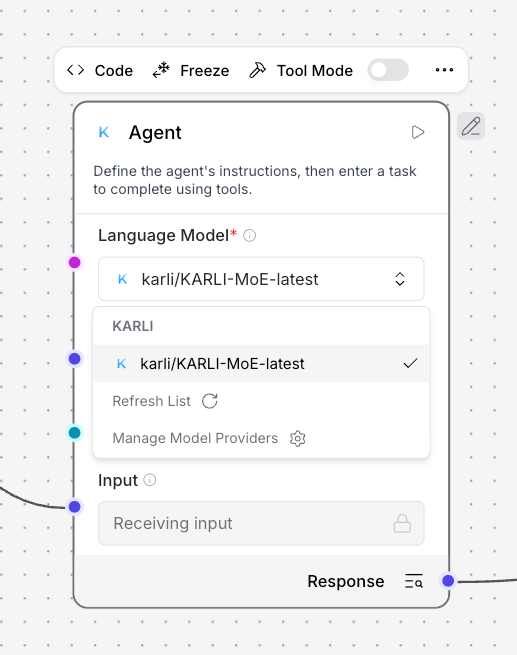

Selecting a KARLI Model

Open any model-aware component (e.g. Language Model or Agent), choose KARLI as the provider, and pick a model from the dropdown. The provider surfaces the shared KARLI_API_KEY / KARLI_BASE_URL credentials described in the Models overview.
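For scripts that run outside the component UI, the same two credentials can be checked up front. The sketch below assumes they are supplied as environment variables named KARLI_API_KEY and KARLI_BASE_URL; the helper `load_karli_credentials` is hypothetical and not part of Agentlab.

```python
import os


def load_karli_credentials(env=None):
    """Return (api_key, base_url), raising if either variable is missing.

    Assumption: credentials live in environment variables with the
    names used in this documentation.
    """
    env = os.environ if env is None else env
    required = ("KARLI_API_KEY", "KARLI_BASE_URL")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError("Missing KARLI credentials: " + ", ".join(missing))
    # Strip a trailing slash so paths can be appended consistently.
    return env["KARLI_API_KEY"], env["KARLI_BASE_URL"].rstrip("/")
```

Passing a plain dictionary as `env` makes the helper easy to exercise without touching the real environment.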

Provider Filtering

Some Agentlab dropdowns are scoped to a specific provider — for example, the extraction-model picker on the Read File component is filtered to KARLI only (see Data Extraction). Broader, deployment-level provider filtering (for data sovereignty or compliance) is enforced by the surrounding Karli Studio platform rather than by Agentlab itself.